Voice authentication is insecure

It seems that voice-based user authentication is not as secure as we thought: researchers have managed to trick the machines keeping our data safe.

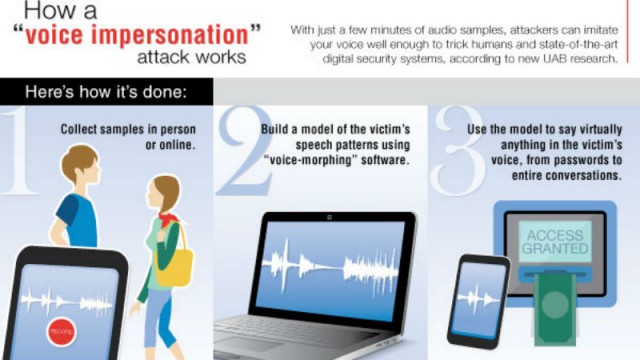

University of Alabama at Birmingham researchers have discovered that both computers and humans are vulnerable to voice impersonation attacks. Using an off-the-shelf voice-morphing tool, the researchers developed a voice impersonation attack to attempt to penetrate automated and human verification systems.

Using snippets of a person’s voice found online and recorded in public, the researchers managed to trick the automated system 80 to 90 percent of the time.

The human-based systems, those with an actual person on the other side, were somewhat more secure, but were still fooled 50 percent of the time.

"Because people rely on the use of their voices all the time, it becomes a comfortable practice", said Nitesh Saxena, Ph.D., the director of the Security and Privacy In Emerging computing and networking Systems (SPIES) lab and associate professor of computer and information sciences at UAB. "What they may not realize is that level of comfort lends itself to making the voice a vulnerable commodity. People often leave traces of their voices in many different scenarios. They may talk out loud while socializing in restaurants, giving public presentations or making phone calls, or leave voice samples online".

"Just a few minutes’ worth of audio in a victim’s voice would lead to the cloning of the victim’s voice itself", Saxena said. "The consequences of such a clone can be grave. Because voice is a characteristic unique to each person, it forms the basis of the authentication of the person, giving the attacker the keys to that person’s privacy".

The team at the University of Alabama at Birmingham is now researching ways to defend against such attacks.

Published under license from ITProPortal.com, a Net Communities Ltd Publication. All rights reserved.